Source of image: Generated by the author using AI

Artificial Intelligence (AI) technology is advancing rapidly, and AI models are becoming increasingly complex and capable. This is being driven by growing computational resources, massive data availability and record investments in developing ever-larger large language models (LLMs) with hundreds of billions of parameters. However, many of the most prominent LLMs are proprietary or closed-source, and their high subscription costs may pose significant barriers to their use by small businesses and start-ups. There are also a large number of open-source AI models available, including many small language models fine-tuned for specific domains or use cases, which can be used freely by anyone. However, each of these models has its own Application Programming Interface (API) and protocols, so every model may require a separate integration in each application. This model fragmentation makes the process cumbersome and inefficient, both for developers building applications for various use cases and for users trying to switch seamlessly between models to find the one best suited to their specific requirements.

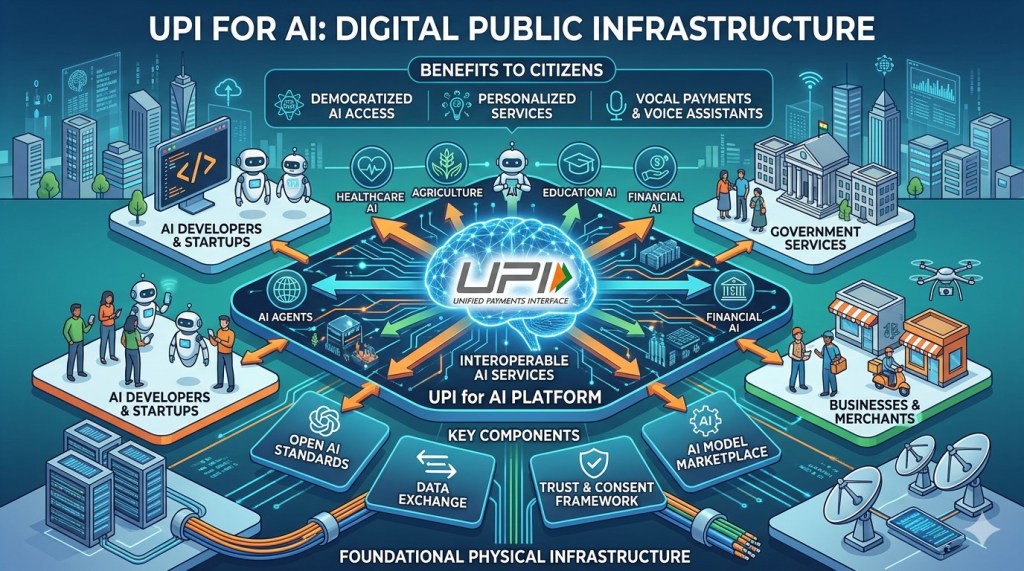

Can a unified interface like that of the Unified Payments Interface (UPI), India's real-time payments system, be developed for open-source AI models for easier access, interoperability and discoverability? Developing such a unified interface as a Digital Public Infrastructure (DPI) can address these concerns. The key factors behind the success of UPI are its open architecture, interoperability, and instant real-time transactions through Virtual Payment Addresses (VPAs) or mobile numbers. This creates a level playing field for various payment apps and services. Similarly, an open network of AI models would allow developers to swap between models from different providers through a Unified Model API without rewriting their application’s core logic. Each model can be given a Virtual Model Address, like a VPA in UPI, for routing a request to the correct model. Such an architecture would also allow models from different providers to interoperate seamlessly and to be selected on the basis of accuracy, speed or specific domain requirements.
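
To make the idea of swapping models without touching application logic concrete, here is a minimal Python sketch. Everything in it is hypothetical and for illustration only: the two provider functions, the `name@provider` address format (chosen to resemble a VPA), and the `unified_generate` function are assumptions, not part of any existing standard or library.

```python
from typing import Callable, Dict

# Two hypothetical providers whose native call styles differ.
def provider_a_complete(text: str, max_tokens: int) -> dict:
    return {"output": f"[A] {text[:max_tokens]}"}

def provider_b_infer(payload: dict) -> str:
    return f"[B] {payload['input']}"

# Adapters translate one unified call shape into each native API.
def _adapt_a(prompt: str) -> str:
    return provider_a_complete(prompt, max_tokens=256)["output"]

def _adapt_b(prompt: str) -> str:
    return provider_b_infer({"input": prompt})

# Hypothetical Virtual Model Addresses mapped to their adapters.
ADDRESS_BOOK: Dict[str, Callable[[str], str]] = {
    "summarizer@provider-a": _adapt_a,
    "summarizer@provider-b": _adapt_b,
}

def unified_generate(model_address: str, prompt: str) -> str:
    """Send a prompt to whichever model the address resolves to."""
    try:
        adapter = ADDRESS_BOOK[model_address]
    except KeyError:
        raise LookupError(f"unknown model address: {model_address}") from None
    return adapter(prompt)

# Application logic depends only on the address, not on the provider.
def summarize(document: str, model_address: str) -> str:
    return unified_generate(model_address, f"Summarize: {document}")
```

In this sketch, switching from one provider to the other means changing only the address string passed to `summarize`; the application code itself stays the same.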

Implementing such a Unified Interface (UI) for AI requires several steps. First, standardization is required to address the issue of API fragmentation in the AI ecosystem. This would involve defining compliant APIs, model data and Input-Output (IO) formats, and a virtual address system for each model. A universal standard would also require broad industry buy-in, which can be secured through a combination of policy measures and engagement with the industry.
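
What a standardized address and IO format might look like can be sketched in a few lines of Python. This is an assumption-laden illustration rather than a proposed specification: the `model@provider` syntax, the allowed characters, and the field names in `InferenceRequest` and `InferenceResponse` are all hypothetical.

```python
import re
from dataclasses import dataclass, field

# Hypothetical address syntax: "<model>@<provider>", lowercase letters,
# digits, hyphens, and (in the model part only) dots.
_ADDRESS_RE = re.compile(r"^([a-z0-9][a-z0-9.-]*)@([a-z0-9][a-z0-9-]*)$")

@dataclass(frozen=True)
class ModelAddress:
    model: str
    provider: str

def parse_model_address(raw: str) -> ModelAddress:
    """Normalize and validate a virtual model address."""
    match = _ADDRESS_RE.match(raw.strip().lower())
    if match is None:
        raise ValueError(f"not a valid model address: {raw!r}")
    return ModelAddress(model=match.group(1), provider=match.group(2))

# Hypothetical common envelope that every compliant model would accept.
@dataclass
class InferenceRequest:
    address: ModelAddress
    input_text: str
    parameters: dict = field(default_factory=dict)

# Hypothetical common envelope that every compliant model would return.
@dataclass
class InferenceResponse:
    address: ModelAddress
    output_text: str
    latency_ms: float
```

With a shared envelope of this kind, a client could build one `InferenceRequest` and send it to any compliant model, which is the property a real standard would need to guarantee.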

Secondly, a central routing and arbitration layer, incorporating a central public registry of AI models, would need to be created to route users’ requests intelligently to the best-suited model based on performance, cost or domain-specific requirements. This central routing layer would also ensure interoperability.
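
The routing step can be illustrated with a small Python sketch of a registry lookup. The registry entries, their addresses and all the numbers below are invented purely for illustration, and the `route` function is a hypothetical simplification: a real arbitration layer would have to weigh several criteria together and rely on measured, audited metrics.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

@dataclass(frozen=True)
class RegistryEntry:
    address: str
    domains: FrozenSet[str]
    accuracy: float       # higher is better
    latency_ms: float     # lower is better
    cost_per_call: float  # lower is better

# Illustrative registry; every value here is made up.
REGISTRY: List[RegistryEntry] = [
    RegistryEntry("legal-large@provider-a", frozenset({"legal"}), 0.92, 900.0, 0.05),
    RegistryEntry("legal-small@provider-b", frozenset({"legal"}), 0.85, 200.0, 0.01),
    RegistryEntry("agri-advisor@provider-c", frozenset({"agriculture"}), 0.88, 350.0, 0.02),
]

def route(domain: str, optimize_for: str,
          registry: List[RegistryEntry] = REGISTRY) -> str:
    """Return the address of the best registered model for a domain."""
    candidates = [entry for entry in registry if domain in entry.domains]
    if not candidates:
        raise LookupError(f"no registered model for domain: {domain}")
    if optimize_for == "accuracy":
        best = max(candidates, key=lambda entry: entry.accuracy)
    elif optimize_for == "speed":
        best = min(candidates, key=lambda entry: entry.latency_ms)
    elif optimize_for == "cost":
        best = min(candidates, key=lambda entry: entry.cost_per_call)
    else:
        raise ValueError(f"unknown criterion: {optimize_for}")
    return best.address
```

For example, under these made-up figures `route("legal", "accuracy")` picks the larger legal model, while `route("legal", "cost")` picks the smaller one.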

Thirdly, a governance and regulatory compliance layer would need to be created to ensure fair access, maintain security and prevent misuse. This would involve defining security standards for authentication, data privacy and encryption to protect sensitive data shared with the AI models. It would also require a compliance framework to be put in place with clear rules to address AI bias, transparency and accountability. A dispute resolution mechanism would also need to be established.

Last but not least, a systematic drive for adoption of the UI for AI would need to be undertaken to ensure that all model providers are onboarded through a broad consensus on uniform API standards. Continued engagement with them would also be required to keep the ecosystem robust and secure. Start-ups and application developers can be given incentives to build applications on top of the unified interface. Government ministries and departments can train specific AI models on their own domain datasets and undertake large-scale development of AI-driven applications for their use cases using this unified AI interface. This would drastically cut the time required to develop their applications and take them live.

The concept of a “UPI for AI” as a digital public infrastructure is both feasible and desirable, and needs to be actively pursued to simplify model access and enhance interoperability. This would also encourage innovation in AI technologies, model training and their deployment across a large number of use cases. For maximum impact and accessibility, such an endeavour needs to be undertaken by the government through its IndiaAI Mission. This would also help ensure security, data privacy and regulatory compliance.

(The above article appeared on October 30, 2025 in The Economic Times online. It is available at: https://economictimes.indiatimes.com/tech/artificial-intelligence/create-a-upi-for-ai-as-a-digital-public-infrastructure/articleshow/124943526.cms?from=mdr)

(The author is a senior IAS officer and currently the Secretary, Department of Border Management, Government of India. The views are personal.)